Part of the 2026 AI Frontier Model War series. Part 1 maps all 22 models at a glance. Part 2 breaks down benchmark scores and deployment guidance. This post tells the story behind the numbers.

The spec sheets tell you what these models can do. They don’t tell you why OpenAI shipped a family of five models instead of one. They don’t explain why Anthropic’s largest model was deprecated six months after launch. They don’t capture the moment in January 2025 when a team in Hangzhou quietly uploaded two MIT-licensed models that the entire AI industry had to reckon with.

The 2026 AI frontier isn’t just a competition of benchmarks. It’s a competition of strategies, philosophies, and bets about what intelligence actually requires. Understanding who’s winning, and why, is more useful than memorizing AIME scores.

Here’s the story behind the models.

The Giants

OpenAI: The Company That Learned to Scale Everything

OpenAI entered 2025 as the unquestioned market leader and spent the year trying to figure out what to do with that position. The answer, it turned out, was proliferation.

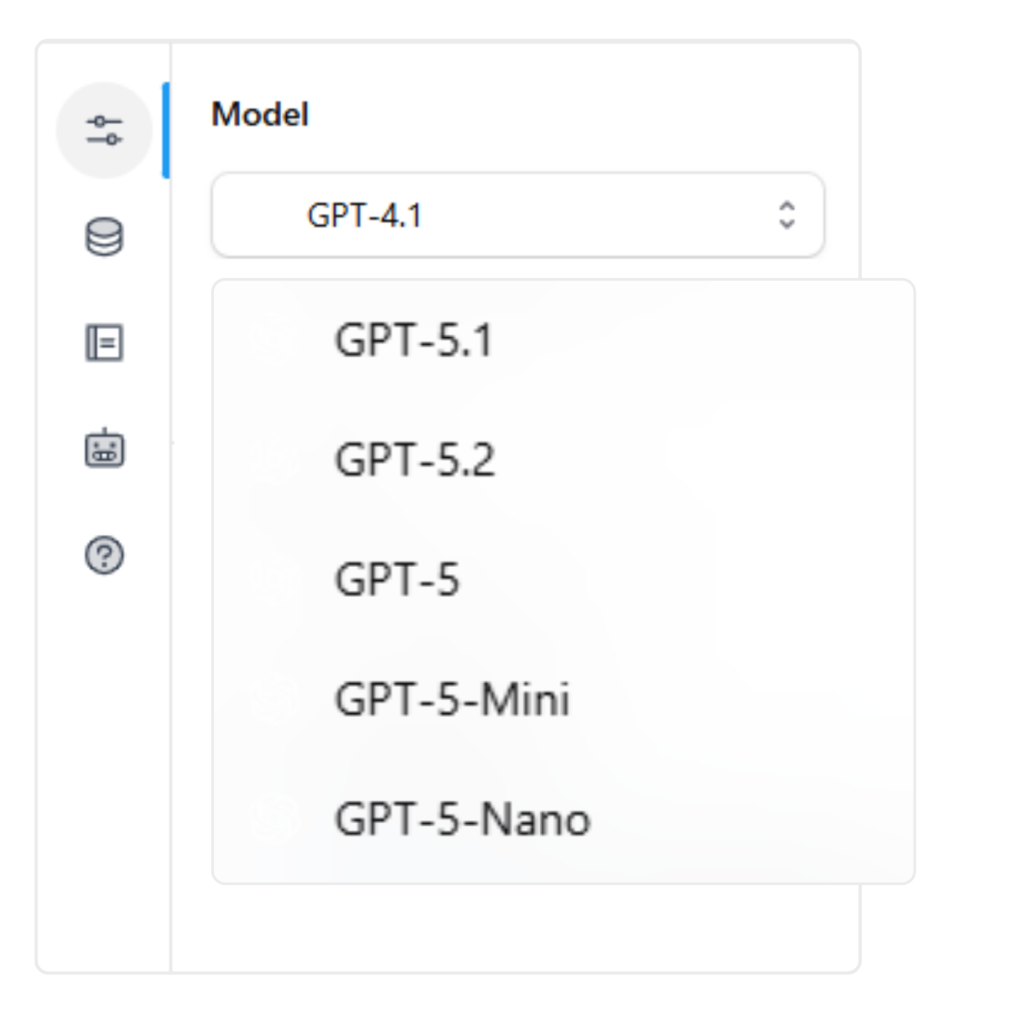

The GPT-5 family launch in August 2025 was strategically revealing. Instead of releasing one flagship model, OpenAI released three simultaneously (GPT-5, GPT-5 mini, and GPT-5 nano) at radically different price points and for radically different use cases. The message was deliberate: OpenAI is not a model company anymore. It’s a platform company with a model family, the same way Apple sells an iPhone lineup, not a phone.

The GPT-5 Family Breakdown

- GPT-5 nano ($0.05/M): Not a research experiment. A land-grab for volume, edge deployments, and high-frequency classification tasks that would have been cost-prohibitive six months earlier.

- GPT-5.1 (same price as GPT-5, better reasoning): An acknowledgment that ‘better’ means different things to different users, with warmer outputs and improved instruction-following.

- GPT-5.2 (tiered inference: Instant, Thinking, Pro): The clearest signal yet that OpenAI believes the future of frontier AI is adaptive compute, not fixed-cost responses.

GPT-5.2 Key Stats

- 100% AIME 2025 and 92.4% GPQA Diamond

- Claimed 30% reduction in hallucination rates, the metric that matters most to enterprise deployment teams

- Variable pricing: production cost modeling is essential before deploying at scale

| Vulnerability: Breadth creates complexity. With seven distinct OpenAI models now in active use, enterprise teams face meaningful cognitive overhead in deciding which model to route which workload to. This is exactly the kind of friction that multi-model platforms resolve, and it creates an opening for providers who offer simplicity on top of the OpenAI API. |

Anthropic vs OpenAI: How Claude’s Long Game Is Winning

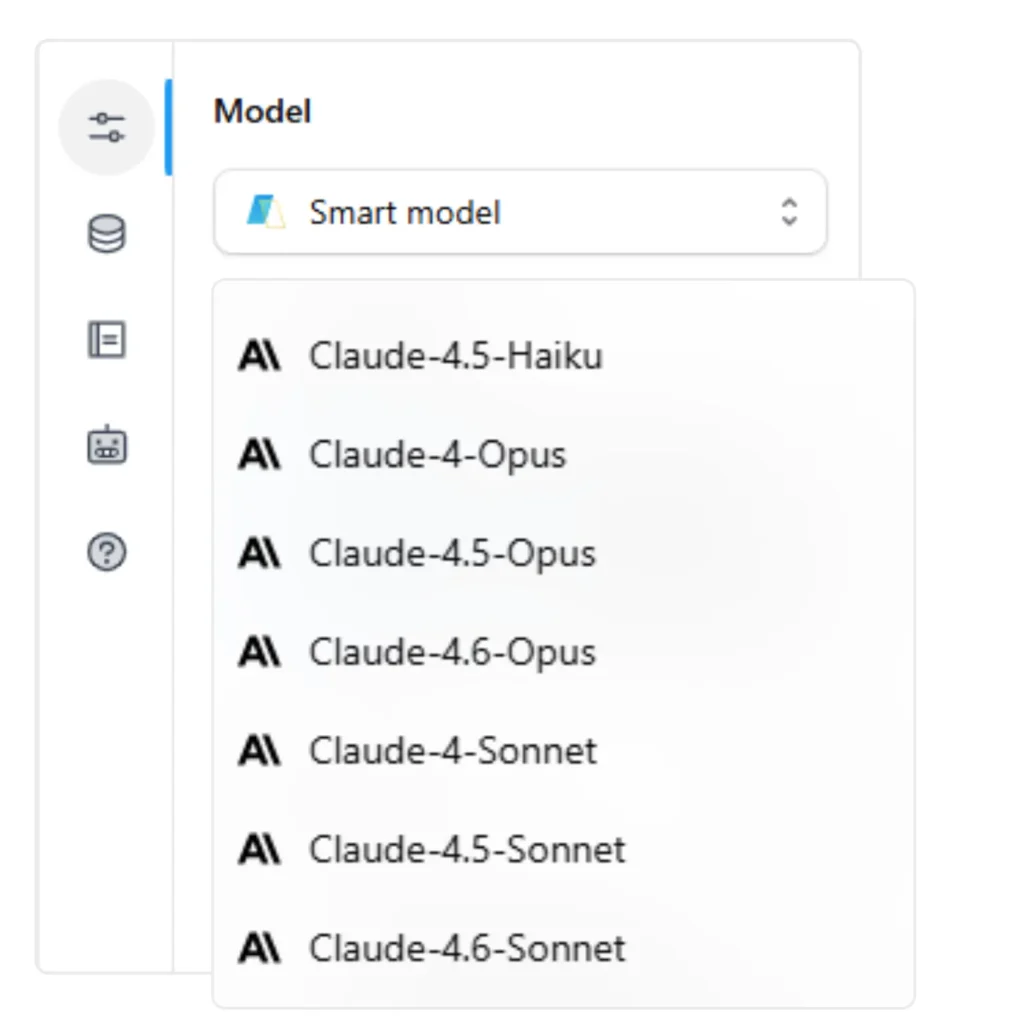

Anthropic’s 2025-2026 has been characterized by a paradox: a company that moves deliberately in an industry that rewards velocity. The deprecation of Claude 4 Opus in January 2026, just months after its launch at $15/$75 per million tokens, was jarring to customers who had built on it. But it was also a signal of how quickly the Claude family has iterated: by the time 4 Opus was deprecated, the 4.5 and 4.6 generation had rendered it economically indefensible.

Anthropic’s Enterprise Strategy

- While OpenAI has pursued mass-market ubiquity, Anthropic has built for the buyer willing to pay more for an AI less likely to cause a problem

- Constitutional AI, model cards, careful safety evaluations: these aren’t just ethics theater. They’re a business strategy targeting the procurement teams, legal departments, and security reviewers who stand between an AI tool and enterprise adoption

- Claude 4.5 Sonnet’s 77.2% SWE-bench Verified score and 30-hour autonomous sessions on an 11,000-line codebase prove this strategy can coexist with frontier capability

- Anthropic isn’t asking enterprise customers to accept a capability tradeoff for safety. It’s claiming there isn’t one

Claude 4.6 Opus: Adaptive Thinking

- Priced at $5/$25/M with a 1M context window, positioned for spec synthesis, RFP analysis, and long-form strategic output

- Selectively applies extended reasoning chains only when complexity warrants it, which is more efficient than always-on chain-of-thought

- Reasoning tokens are expensive; this architecture directly addresses that cost
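To make the cost argument concrete, here is a minimal sketch of how selective reasoning changes the average cost per request. It uses the $5/$25 per million token prices quoted above. Everything else is an assumption for illustration: that reasoning tokens are billed at the output rate, the token counts, and the share of requests that trigger extended reasoning. The function names are hypothetical.

```python
# Prices from the article: Claude 4.6 Opus at $5 input / $25 output per million tokens.
INPUT_PRICE_PER_M = 5.00
OUTPUT_PRICE_PER_M = 25.00


def request_cost(input_tokens: int, answer_tokens: int, reasoning_tokens: int = 0) -> float:
    """Dollar cost of one request. ASSUMPTION: reasoning tokens bill as output tokens."""
    output_tokens = answer_tokens + reasoning_tokens
    return (input_tokens * INPUT_PRICE_PER_M + output_tokens * OUTPUT_PRICE_PER_M) / 1_000_000


def blended_cost(reasoning_share: float, input_tokens: int, answer_tokens: int,
                 reasoning_tokens: int) -> float:
    """Average cost per request when only a share of requests uses extended reasoning."""
    if not 0.0 <= reasoning_share <= 1.0:
        raise ValueError("reasoning_share must be between 0 and 1")
    plain = request_cost(input_tokens, answer_tokens)
    reasoned = request_cost(input_tokens, answer_tokens, reasoning_tokens)
    return (1 - reasoning_share) * plain + reasoning_share * reasoned


if __name__ == "__main__":
    # Hypothetical workload: 2,000 input, 500 answer, 4,000 reasoning tokens per request.
    always_on = blended_cost(1.0, 2_000, 500, 4_000)
    selective = blended_cost(0.2, 2_000, 500, 4_000)
    print(f"always-on: ${always_on:.4f}  selective (20%): ${selective:.4f}")
```

Under these made-up numbers, the gap between always-on and selective reasoning comes almost entirely from the reasoning tokens, which is why the share of requests that need them is worth measuring on your own traffic.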

| Vulnerability: Anthropic’s pricing premium is defensible only as long as quality is demonstrably superior. As Claude 4.6 Sonnet becomes the default at $3/$15/M and challengers like Kimi K2 approach comparable quality at $0.60/$2.50/M, the premium narrative faces increasing pressure. |

Gemini vs ChatGPT: Google’s Comeback and the Preview That Changed Everything

Google’s AI journey in 2025 was a story of redemption followed by a genuine surprise. The early Gemini releases had faced public criticism for quality inconsistencies, a painful reputation hit for the company that invented the transformer architecture and trained the researchers who founded both OpenAI and Anthropic.

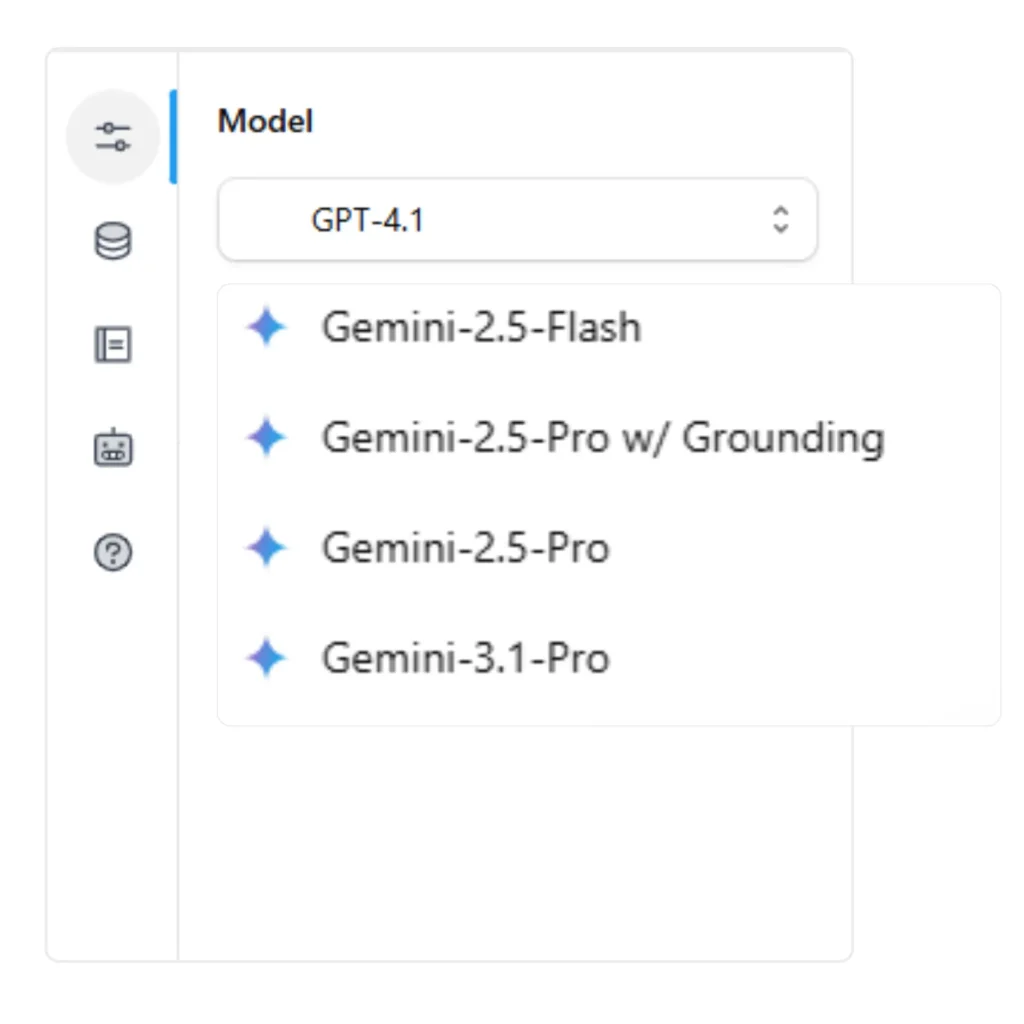

Gemini 2.5 Series

- Gemini 2.5 Flash: 232 tokens per second, 1M token context, $0.30/M

- Now one of the best-value models in the market and the default recommendation for high-volume, latency-sensitive workloads

- Repaired most of the reputation damage from early Gemini releases

Gemini 3.1 Pro Preview (February 19, 2026)

- 77.1% on ARC-AGI-2, the highest published score as of this writing

- ARC-AGI-2 was designed specifically to defeat models trained to pass benchmark tests; it requires novel reasoning on patterns that cannot be memorized from training data

- François Chollet, its creator, argues ARC-AGI-2 is the best available proxy for genuine general reasoning ability

- Google’s result is harder to explain away than AIME scores, which larger training sets can partially address

- Whether Gemini 3.1 Pro maintains that position when it exits preview is an open question

| Vulnerability: Google’s AI business is structurally complex: Gemini models power consumer products (Google Search, Workspace) while also competing as an API product against OpenAI and Anthropic. That dual mandate creates internal tension about where to prioritize resources and which customers to serve first. |

The Challengers

DeepSeek: When the Assumptions Broke

January 20, 2025. DeepSeek uploads two models, V3 and R1, to Hugging Face with MIT licenses. Both perform at or near frontier quality on major benchmarks. Both are free to download, modify, and deploy commercially. The reaction from the AI industry was somewhere between disbelief and alarm.

What DeepSeek V3 Broke

- The cost floor: DeepSeek V3 at $0.27/M via API, or $0 if you self-host, forced every other provider to reckon with a free option that’s nearly as good.

- The compute assumption: The prevailing belief had been that frontier-quality models required frontier-level investment. DeepSeek V3’s efficient MoE design activates only 37 billion parameters per forward pass, demonstrating that architectural cleverness can substitute for raw compute at a level no one had publicly shown before.

- The licensing assumption: Not because open-source AI was new (Meta’s LLaMA series had established that), but because the quality was new. The MIT license on a near-frontier model changed the deployment calculus entirely.

DeepSeek R1: Reasoning at Open-Source Prices

- ~70% AIME 2025, competitive with where frontier labs were in mid-2024

- MIT licensed, free to self-host

- For regulated industries (healthcare, legal, financial services), the implications were immediate: data that cannot leave your infrastructure can now be processed by a model with genuine reasoning capability

- On-premise AI deployment no longer means accepting a significant capability sacrifice

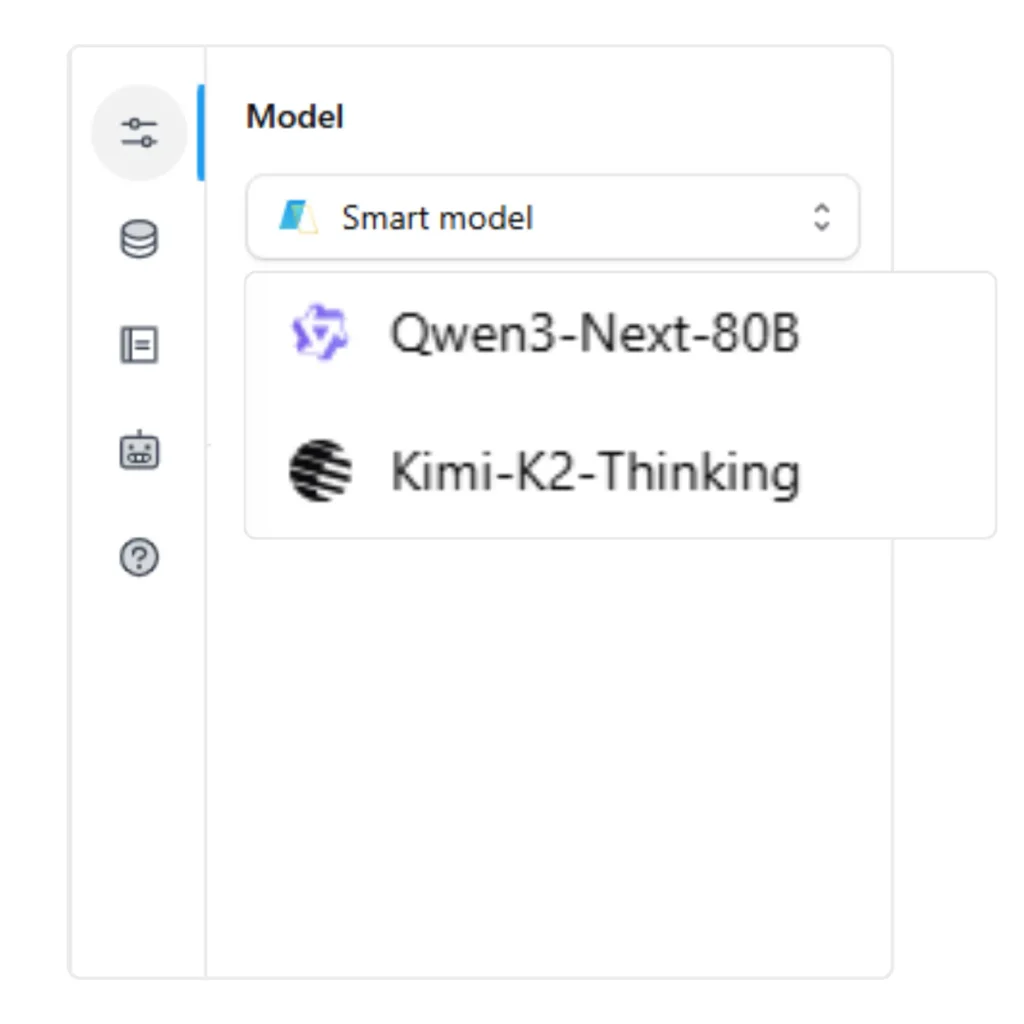

Kimi K2 Thinking: The Score That Nobody Expected

If DeepSeek was the January shock, Kimi K2 Thinking was the November one. Moonshot AI, a Beijing-based startup founded in 2023, released Kimi K2 Thinking on November 10, 2025, and the benchmark table it printed was not supposed to exist at that price point.

Kimi K2 Thinking: Benchmark Results

- 99.1% AIME 2025

- 84.5% GPQA Diamond

- 71.3% SWE-bench Verified

- 60.2% BrowseComp (vs. GPT-5’s 54.9%), making Kimi K2 the top published performer on this web browsing and research benchmark at any price

- Price: $0.60/$2.50 per million tokens

Architecture

- 1-trillion-parameter mixture-of-experts model with 32 billion active parameters

- Same efficient design philosophy as DeepSeek V3, applied to a reasoning-specialized model

- 200-300 sequential tool calls per session, making it particularly relevant for long-horizon research workflows
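The mixture-of-experts point is easier to see as arithmetic. The sketch below computes the share of parameters active per forward pass, using only the figures cited in this article (685B total and 37B active for DeepSeek V3; 1 trillion total and 32B active for Kimi K2 Thinking). The function name is hypothetical, and the active share is only a rough proxy for per-token compute, not a measurement of it.

```python
# (total parameters, active parameters per forward pass) in billions,
# taken from the figures cited in this article.
MODELS = {
    "DeepSeek V3": (685, 37),
    "Kimi K2 Thinking": (1000, 32),
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Share of a mixture-of-experts model's parameters used per forward pass."""
    if total_b <= 0 or not 0 < active_b <= total_b:
        raise ValueError("expected 0 < active_b <= total_b")
    return active_b / total_b


if __name__ == "__main__":
    for name, (total_b, active_b) in MODELS.items():
        share = active_fraction(total_b, active_b)
        print(f"{name}: {active_b}B of {total_b}B active ({share:.1%})")
```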

Why BrowseComp Matters for Marketing Teams

BrowseComp measures an AI’s ability to find and synthesize information across live web pages, the core skill for competitive intelligence, market research, and content verification. At 60.2% vs. GPT-5’s 54.9%, Kimi K2 is not ‘almost as good as the expensive option.’ It’s better.

| Pricing Implication: Kimi K2’s existence makes it very hard to justify paying $15/M for output tokens when a model at $2.50/M is matching or beating it on reasoning benchmarks. The challengers aren’t just closing the gap. In specific capability categories, they’ve crossed it. |

Qwen3 Next 80B: Frontier on a Single GPU

Alibaba’s Qwen3 Next 80B is a different kind of challenger: not trying to beat GPT-5.2 on AIME, but trying to be the best model that fits on infrastructure you actually own.

Qwen3 Next 80B Specs

- 74.6% LiveCodeBench

- Apache 2.0 license

- Runs on a single high-end GPU

That combination (capable, free, and accessible without a cloud API dependency) addresses a specific and underserved segment: organizations that have the technical capability to run their own inference but can’t afford to staff a team to manage a 685B-parameter model like DeepSeek V3. An 80B model at Apache 2.0 licensing is a materially different deployment conversation than a 685B model.

For MSPs whose clients have data handling requirements but not dedicated ML infrastructure, Qwen3 80B represents a practical path to self-hosted AI that didn’t exist eighteen months ago.
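A rough way to see why 80B and 685B are different deployment conversations is to estimate the memory the weights alone would need. This is a back-of-the-envelope sketch, not a sizing guide: the quantization levels are assumptions, the function name is hypothetical, and the estimate ignores KV cache, activations, and runtime overhead, all of which add to the real footprint.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes.

    One billion parameters at 8 bits per weight is about 1 GB, so the
    estimate is simply params_billions * bits_per_weight / 8.
    """
    if params_billions <= 0 or bits_per_weight <= 0:
        raise ValueError("params_billions and bits_per_weight must be positive")
    return params_billions * bits_per_weight / 8


if __name__ == "__main__":
    # ASSUMPTION: 16-, 8-, and 4-bit weights are illustrative precision choices.
    for name, params_b in [("80B model", 80), ("685B model", 685)]:
        for bits in (16, 8, 4):
            print(f"{name} @ {bits}-bit: ~{weight_memory_gb(params_b, bits):.0f} GB")
```

On this estimate an 80B model needs about 40 GB for its weights at 4-bit precision, while a 685B model needs several hundred gigabytes at any of these precisions, which is the practical difference between one accelerator and a multi-GPU cluster.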

Claude vs ChatGPT vs Gemini: What the 2026 Benchmarks Actually Tell You

The numbers from Part 2 of this series tell one story. The narrative context tells another.

On raw reasoning:

- GPT-5.2 has the best published AIME 2025 and GPQA Diamond scores

- Kimi K2 is close enough at a fraction of the price that for any cost-sensitive workload, GPT-5.2’s lead doesn’t justify the premium

- Gemini 3.1 Pro’s 77.1% ARC-AGI-2 is the most interesting result this cycle: that benchmark is specifically designed to be gaming-resistant, making Google’s score harder to explain away

On software engineering:

- Claude 4.5 Sonnet’s 77.2% SWE-bench Verified remains the top published single-model result

- The 30-hour autonomous session capability is as important as the score itself: real-world software work looks less like a single prompt and more like an 8-hour coding session with dead ends, refactoring, and dependency debugging

- A model that can sustain coherent, purposeful work across that window is qualitatively different from one that answers a 2,000-token coding question correctly

On Cost: The Biggest Story of the Past Year

| Capability Tier | Best Model | Input price ($/M tokens) | Comparable tier, 12 months ago |
| --- | --- | --- | --- |
| Top reasoning | GPT-5.2 | Varies | ~$60/M |
| Near-top reasoning | Kimi K2 | $0.60 | ~$30/M |
| Strong general | Claude 4.6 Sonnet | $3.00 | ~$15/M |
| High-volume capable | Gemini 2.5 Flash | $0.30 | ~$2/M |
| Free + self-hosted | DeepSeek V3 | $0.00 | ~$0 (LLaMA 3) |

The compression is real and ongoing. Teams building cost models on last year’s API pricing are likely overpaying by a factor of two to five.
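One way to check your own cost model is to reprice a month of traffic at current rates. The sketch below does that for the three models whose input and output prices both appear in this article. The monthly token volumes are a hypothetical workload, and the function and dictionary names are illustrative only; substitute your own usage data and verify prices with the provider.

```python
# (input $/M tokens, output $/M tokens), as quoted in this article.
PRICES = {
    "Kimi K2 Thinking": (0.60, 2.50),
    "Claude 4.6 Sonnet": (3.00, 15.00),
    "Claude 4.6 Opus": (5.00, 25.00),
}


def monthly_cost(model: str, input_tokens_m: float, output_tokens_m: float) -> float:
    """Monthly spend in dollars for token volumes given in millions of tokens."""
    if input_tokens_m < 0 or output_tokens_m < 0:
        raise ValueError("token volumes must be non-negative")
    input_price, output_price = PRICES[model]
    return input_tokens_m * input_price + output_tokens_m * output_price


if __name__ == "__main__":
    # HYPOTHETICAL workload: 500M input tokens and 100M output tokens per month.
    for model in PRICES:
        print(f"{model}: ${monthly_cost(model, 500, 100):,.2f} per month")
```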

What This Means for Your Team

For SaaS Marketing Teams

Research and Competitive Intelligence

- Kimi K2’s BrowseComp lead has a direct implication: if your team runs research workflows, tracks competitor updates, monitors industry news, or synthesizes market signals, evaluate Kimi K2 alongside or instead of your current default

- The quality-per-dollar ratio at $0.60/M is not a compromise. It’s currently the best available option for research-intensive tasks

GTM, Copy, and General Output

- Claude 4.6 Sonnet and GPT-5.1 remain the strongest general choices

- Claude reads as more precise and careful; GPT-5.1 reads as warmer and more conversational

- Both are excellent: run your actual use cases through both before committing

| The Pattern That Wins: The teams winning with AI right now aren’t the ones who found the single best model. They’re the ones running a multi-model workspace where different tasks route to different models automatically, and where that routing is a configuration decision, not a standing engineering project. |
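As an illustration of routing as configuration, here is a minimal sketch in which the task-to-model mapping lives in a plain dictionary. The task categories follow the recommendations in this article. The model strings are hypothetical labels rather than real API identifiers, and the default fallback is an assumption.

```python
# Task-to-model routing table. The model strings are HYPOTHETICAL labels,
# not provider API identifiers; the pairings follow this article's guidance.
ROUTES = {
    "research": "kimi-k2-thinking",      # BrowseComp lead
    "copywriting": "claude-4.6-sonnet",  # general GTM and copy output
    "high_volume": "gemini-2.5-flash",   # latency-sensitive, high-volume work
    "coding": "claude-4.5-sonnet",       # SWE-bench Verified result
}
DEFAULT_MODEL = "claude-4.6-sonnet"  # ASSUMPTION: fallback for unlisted task types


def route(task_type: str, routes: dict[str, str] = ROUTES,
          default: str = DEFAULT_MODEL) -> str:
    """Return the model label configured for a task type, or the default."""
    return routes.get(task_type.strip().lower(), default)


if __name__ == "__main__":
    for task in ("research", "Coding", "translation"):
        print(f"{task} -> {route(task)}")
```

Changing which model handles research then means editing one dictionary entry, not redeploying application code.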

For MSPs and Agencies

The challenger landscape specifically changes the MSP calculus in two ways.

Self-Hosted AI Is Now Viable

- DeepSeek V3 and Qwen3 80B make self-hosted AI economically viable for clients who previously couldn’t justify it

- If you have clients in regulated industries waiting for on-premise AI that doesn’t require accepting a mediocre small model, that wait is largely over

Margin Structure Has Changed

- Kimi K2’s performance at $2.50/M output means you can now deliver top-tier reasoning output to clients at a cost structure that supports healthy margins

- When the best reasoning model for a given task costs a quarter of what it did a year ago, the economics of AI-as-a-service change significantly

The Infrastructure Layer

- Isolating each client’s environment, governing costs, and configuring model selection per workflow does not get simpler as more models enter the picture. It gets more complex.

- The MSPs scaling profitably have solved the infrastructure layer first: workspace isolation per client, custom agents configured to each client’s context, credit caps and alerts per workspace

- Optionally: the ability to embed AI directly into client products via an Embed SDK rather than shipping them to a third-party interface
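The per-workspace controls described above can be sketched as a small data structure. This is an illustrative model only: the class name, fields, and the 80% alert threshold are assumptions, not a description of any particular platform.

```python
from dataclasses import dataclass, field


@dataclass
class Workspace:
    """One isolated client workspace with its own spend cap (hypothetical model)."""
    client: str
    credit_cap: float          # maximum spend in dollars
    alert_ratio: float = 0.8   # ASSUMPTION: alert once 80% of the cap is spent
    spent: float = 0.0
    alerts: list[str] = field(default_factory=list)

    def record_usage(self, cost: float) -> bool:
        """Charge a request; return False and charge nothing if it would exceed the cap."""
        if cost < 0:
            raise ValueError("cost must be non-negative")
        if self.spent + cost > self.credit_cap:
            self.alerts.append(f"{self.client}: request blocked, cap ${self.credit_cap:.2f}")
            return False
        threshold = self.alert_ratio * self.credit_cap
        was_below = self.spent < threshold
        self.spent += cost
        if was_below and self.spent >= threshold:
            self.alerts.append(f"{self.client}: {self.alert_ratio:.0%} of cap reached")
        return True


if __name__ == "__main__":
    ws = Workspace(client="acme", credit_cap=100.0)
    for cost in (50.0, 35.0, 20.0):
        print(cost, ws.record_usage(cost), ws.spent)
    print(ws.alerts)
```

Keeping spend and alerts on the workspace object, not in a shared counter, is what keeps one client’s usage from affecting another’s budget.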

The Verdict: No Single Winner, One Clear Pattern

- OpenAI has the broadest model family and the strongest brand

- Anthropic has the most defensible enterprise positioning and the coding crown

- Google has the most intriguing long-term reasoning result (Gemini 3.1 Pro ARC-AGI-2)

- DeepSeek changed the economics of the entire industry

- Kimi K2 Thinking printed benchmark scores that shouldn’t exist at that price

- Qwen3 Next 80B brought the frontier to a single GPU

The pattern across all of this is consistent: the moat that once protected frontier AI (compute, capital, talent) is narrowing faster than most people predicted. The challengers are not years behind. In some categories, they’re ahead.

For teams and organizations using these models, that’s good news. The era of ‘use ChatGPT for everything’ is over, replaced by a more interesting and more capable problem: matching the right model to each task in a workflow that you actually control.

The organizations doing that well aren’t the ones with access to the most expensive models. They’re the ones who’ve built the infrastructure to use all of them.

More in the 2026 AI Frontier Model War Series

Part 1: All 22 models at a glance and the five trends shaping 2026.

Part 2: Benchmark data and deployment guidance for marketing teams and MSPs.

This analysis reflects publicly available information as of March 2026. Model capabilities and pricing change frequently; verify current specifications with providers before production deployment.