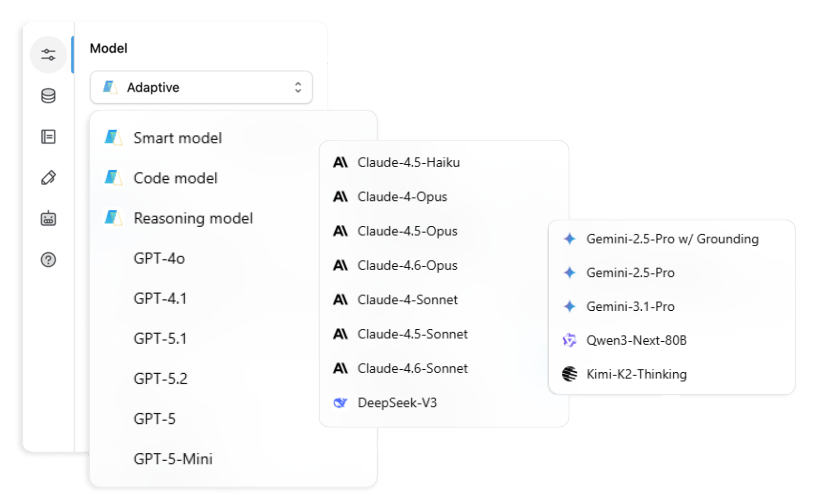

GPT-5, Claude 4 Sonnet, Gemini Flash, DeepSeek V3 and more: a practical comparison guide and per-workspace strategy for teams and MSPs.

The reasoning tier gets the headlines. Benchmark scores like 100% AIME 2025 and 92.4% GPQA Diamond are the numbers shared in newsletters and debated on LinkedIn. But if you mapped every AI interaction your team had last week, the vast majority weren’t reasoning tasks.

They were smart tasks:

- Writing a first draft

- Summarizing a meeting

- Replying to a customer

- Pulling key points from a document

- Generating social copy from a brief

This is the smart tier, general intelligence work that doesn’t require a model to deliberate for twenty minutes before responding. It’s also where most of your AI spending goes, where your team’s daily productivity either compounds or stalls, and where the wrong default model quietly costs you more than any benchmark comparison would suggest.

This guide covers:

- The eight models that matter in the smart tier, plus one notable alternative

- A practical decision framework for choosing between them

- The per-workspace strategy most teams aren’t running but should be

What Counts as a Smart Task

Smart tasks share a few key characteristics:

- They’re well-defined with a clear output format

- They don’t require working through a genuinely novel problem

- They require fluency, accuracy and speed, not extended deliberation

In practice, smart tasks include:

- Writing and editing copy across formats

- Summarizing meetings and documents

- Answering questions from a knowledge base

- Generating customer-facing communication

- Transforming content from one format to another (transcript to blog post, spec sheet to FAQ, call notes to CRM entry)

- Light analysis, like reading a CSV and flagging what’s worth looking at

Smart tasks are not multi-step reasoning chains, novel mathematical problems, complex code generation, or tasks requiring extended autonomous execution. Those belong in the reasoning and code tiers. The models built for those jobs are slower, more expensive and often overkill for work that makes up the majority of most teams’ AI usage.

Getting your smart tier right matters more than getting your reasoning tier right, because you’re in it all day, every day.

Quick Comparison: All 8 Models at a Glance

| Model | Input ($/M tokens) | Output ($/M tokens) | Context Window | Best For |

|---|---|---|---|---|

| GPT-5.1 | $1.25 | $10.00 | 400K | Brand voice, customer-facing copy |

| GPT-5 mini | $0.25 | $2.00 | 400K | High-volume drafts, automated pipelines |

| GPT-5 nano | $0.05 | $0.40 | 400K | Classification, routing, edge deployments |

| Claude 4.6 Sonnet | $3.00 | $15.00 | 1M (beta) | Complex docs, multi-constraint tasks |

| Claude 4.5 Haiku | $1.00 | $5.00 | 200K | Volume tasks, Anthropic-native workflows |

| Gemini 2.5 Flash | $0.30 | $2.50 | 1M | Real-time generation, high-speed pipelines |

| Gemini 2.5 Pro + Grounding | $1.25 | $10.00 | 1M | Live intelligence, competitive research |

| DeepSeek V3 | $0.27 (API) / Free (self-hosted) | $1.10 | 128K | Budget deployments, data sovereignty |

Pricing and specifications current as of March 2026. Verify with providers before production deployment.

The Contenders

GPT-5.1 Review: OpenAI’s Best AI Model for Writing and Brand Voice

1.25/1.25/10.00 per million tokens · 400K context

GPT-5 mini Review: The Best AI Model for High-Volume Content Generation

0.25/0.25/2.00 per million tokens · 400K context

GPT-5 nano Review: Classification, Routing and Edge Deployments

0.05/0.05/0.40 per million tokens · 400K context

Claude 4.6 Sonnet Review: Anthropic’s Best AI Model for Complex Business Tasks

3.00/3.00/15.00 per million tokens · 1M context (beta)

Claude 4.5 Haiku Review: High-Quality Budget Option from Anthropic

~1.00/1.00/5.00 per million tokens · 200K context

Gemini 2.5 Flash Review: The Fastest AI Model for Real-Time Workflows

0.30/0.30/2.50 per million tokens · 232 tokens/sec · 1M context

Gemini 2.5 Pro + Grounding Review: Live Intelligence for Competitive Research

1.25/1.25/10.00 per million tokens · 1M context

DeepSeek V3 Review: Best Open-Source AI Model for Budget and Self-Hosted Deployments

0.27/0.27/1.10 per million tokens via API · Free self-hosted · 128K context · MIT license

Also Worth Noting: Qwen3 Next 80B

For regulated-industry or government deployments requiring on-premise hosting, Qwen3 Next 80B is an alternative to DeepSeek V3. It offers comparable self-hosted capability with a similar open-weight architecture. It’s not covered in depth in this guide, but if your sovereignty requirements rule out all cloud-hosted options, it’s worth evaluating alongside DeepSeek V3.

How to Choose: 6 Questions to Find the Right Model

These six questions will narrow the field faster than any feature matrix.

The Per-Workspace Strategy

Most teams leave real productivity and cost savings on the table, not in their model selection, but in their model uniformity.

One model, set as the global default, applied identically to every workflow, every team, every use case. It’s the path of least resistance and it’s consistently suboptimal. The cost difference between a thoughtful per-workspace model policy and a universal default is often 40 to 60% on inference spend.

A per-workspace model strategy means matching the default to the job of the workspace, not the preference of whoever configured it last.

Here’s what it looks like in practice:

Brand and content workspace: GPT-5.1 as default

- The tone-sensitivity justifies the $1.25/M input cost over mini

- Writers get better first drafts, editors make fewer changes

- The workflow runs faster even though the model is more expensive, because fewer revision cycles means less total time spent

Support and customer communication workspace: Claude 4.5 Haiku for volume, Claude 4.6 Sonnet for escalations

- Haiku handles the standard queue at a third of Sonnet’s price

- Sonnet handles complex or sensitive cases where nuanced judgment matters

- Most support teams don’t need the premium model on every ticket, they need it on the right ones

Competitive intelligence workspace: Gemini 2.5 Pro + Grounding exclusively

- Without live search, you’re analyzing the market as it existed at the model’s training cutoff

- With it, you’re analyzing the market as it exists this week

- For competitive monitoring, this distinction is the entire value of the workflow

High-volume automation workspace: Gemini Flash or GPT-5 mini

- These outputs feed into other processes and aren’t customer-facing

- Speed and cost are the metrics that matter, not polish

For MSPs: per-client workspace configuration

- A law firm client gets a different model default than a DTC ecommerce client

- The law firm may require self-hosted DeepSeek V3 for data sovereignty

- The DTC brand needs Flash for high-volume social generation and GPT-5.1 for brand-voice content

- A single model policy across all client workspaces is the wrong architecture, not because any individual model is inadequate, but because the clients are different

The quality difference, measured in editing time and revision cycles, is harder to quantify but consistently real.

Putting It Together

The smart tier isn’t glamorous. It doesn’t generate benchmark headlines or launch-day coverage. But it’s where the compounding value of AI in a business actually lives:

- The daily drafts that go out faster

- The weekly summaries that take minutes instead of an hour

- The customer replies that require less editing

- The competitive brief that gets to the team before the window closes

Choosing the right model for the right task, and configuring it consistently across the workspaces where that work happens, is the operational discipline that separates teams running AI as a productivity multiplier from teams running it as a slightly better search engine.

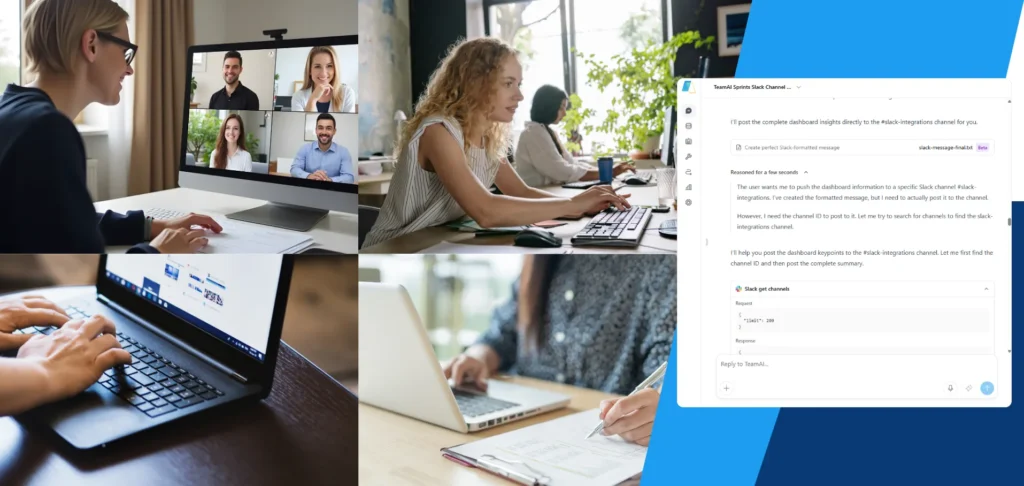

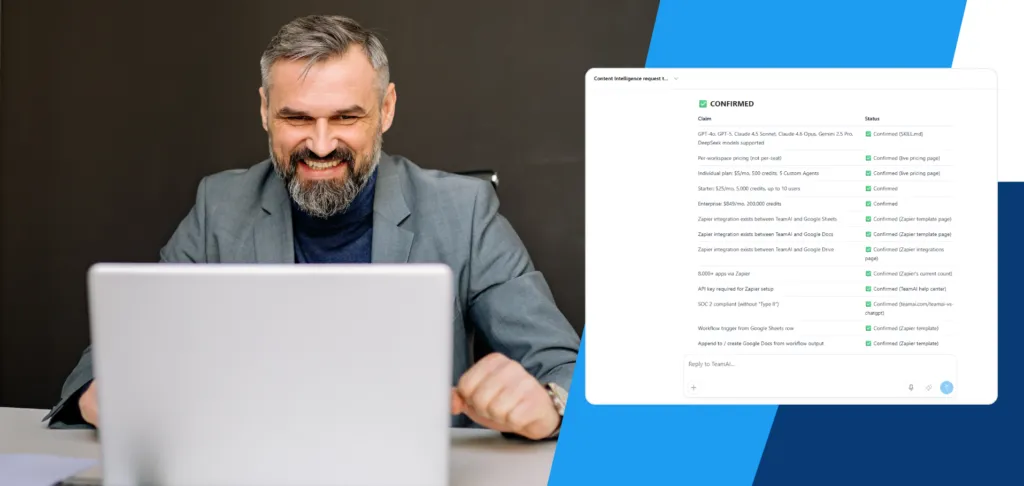

Most teams don’t want to revisit this decision every quarter or manage a spreadsheet of model-to-task mappings. TeamAI is built for exactly this. You set the model policy per workspace once, and the routing, cost controls and usage alerts run from there. The free plan is a reasonable place to start. Most teams that configure even one workspace properly find the logic extends naturally to the rest.

Get started with TeamAI →Frequently Asked Questions

Which AI model is best for writing in 2026?

GPT-5.1 is the top choice for writing tasks where tone and brand voice matter. It produces copy that requires less editing to reach publishable quality. For high-volume writing at lower cost, GPT-5 mini is the practical alternative, especially in workflows with a human review stage.

Claude vs GPT: which is better for business?

It depends on the task. GPT-5.1 leads on tone-sensitive writing and conversational copy. Claude 4.6 Sonnet leads on complex, multi-constraint tasks, long documents and outputs requiring careful judgment. For most businesses, the right answer is both, used in different workspaces for different jobs.

What is the best AI for content creation in 2026?

For brand-driven content creation, GPT-5.1 or Claude 4.6 Sonnet. For high-volume content pipelines, GPT-5 mini or Gemini 2.5 Flash. The best model depends on whether you’re optimizing for quality, volume or cost. See the per-workspace strategy section above for how to run both.

What is the cheapest capable AI model?

DeepSeek V3 at $0.27/M input (or free self-hosted) is the most cost-competitive capable option. GPT-5 nano at $0.05/M is cheaper but suited to classification and routing tasks rather than general writing. Gemini 2.5 Flash at $0.30/M is the cheapest option with a 1M context window and production-grade speed.

Can I use different AI models per workspace?

Yes, and you should. A per-workspace model strategy, matching the default model to the job of that workspace rather than using one universal default, typically reduces inference spend by 40 to 60% while improving output quality in tone-sensitive workflows. TeamAI supports per-workspace model configuration out of the box.

Gemini Flash vs GPT-5 mini: which should I use?

Gemini 2.5 Flash is faster (232 tokens per second), cheaper ($0.30/M vs $0.25/M input), and comes with a 1M context window versus 400K. GPT-5 mini has a slight edge on writing quality and may integrate more naturally into existing OpenAI-based workflows. For pure volume and speed, Flash wins. For output quality in OpenAI-native pipelines, mini is the better fit.

What is the best AI model for customer support?

Claude 4.5 Haiku for standard volume tickets, Claude 4.6 Sonnet for complex or sensitive escalations. This tiered approach captures roughly 90% of Sonnet’s quality on most tickets at a third of the cost. Running Sonnet on every ticket is unnecessary and expensive for most support teams.

Is DeepSeek V3 safe to use for business?

DeepSeek V3 is MIT licensed, meaning it can be fully self-hosted with no data leaving your infrastructure. For cloud-hosted API usage, apply the same data handling policies you would to any third-party AI provider. For regulated industries with hard data sovereignty requirements, the self-hosted deployment is the recommended path.