The verdict: who leads in each capability tier, and what that signals for the next 12 months

2026 AI Blog Series · Top-of-Funnel

The mistake most people make when following the AI race is looking for one winner. There isn’t one, not in 2026. There are six competitions happening simultaneously, and a different organization is leading each of them.

A single benchmark score tells you almost nothing useful. Here’s why:

- GPT-5.2 scores 100% on AIME 2025, and so does Claude 4.5 Sonnet, because AIME 2025 is now a saturated test on which top models have hit the ceiling.

- Kimi K2 Thinking, a model most people haven’t heard of, matches or beats both of them on three other benchmarks at a fraction of the cost.

- None of that tells you which model writes better GTM briefs or manages a 30-hour autonomous workflow without going off-rails.

Understanding who leads which tier, and what that means for the next twelve months, is more useful than any leaderboard snapshot. Here’s our verdict.

Tier 1: Reasoning Depth

Leader: OpenAI (GPT-5.2) and Kimi K2 Thinking

GPT-5.2

- 100% AIME 2025 and 92.4% GPQA Diamond

- Claimed 30% reduction in hallucinations versus GPT-5

- Tiered inference system: Instant, Thinking, and Pro modes, the most flexible reasoning architecture any provider has shipped

- Variable pricing is the catch; production cost modeling is essential before deploying at scale

Kimi K2 Thinking (Moonshot AI)

- 99.1% AIME 2025 and 84.5% GPQA Diamond

- Leads the field on BrowseComp, a benchmark designed to measure long-horizon web research

- Priced at $0.60/M input, making it the most cost-efficient capable reasoner available today

Claude 4.6, Gemini 2.5 Pro, GPT-4.1, and Kimi K2 all offer million-token context windows. The bottleneck has shifted:

- No longer: can it see the whole document?

- Now: does it actually attend to all of it intelligently?

- For marketing teams: AI can hold your entire brand guide, competitive landscape, and launch brief in a single session

- For MSPs: AI can operate across complex multi-document client environments without chunking workarounds

Tier 2: Autonomous Agents and Agentic AI Tools

Leader: Anthropic

This isn’t close. Claude 4.5 Sonnet has reached a capability level that no other model in production has matched. Key metrics:

- 30-hour autonomous runs without human intervention

- 11,000-line codebase handling

- SWE-bench Verified score of 77.2%

- Kimi K2’s 200-300 sequential tool calls per session make it a strong second for research-heavy agentic workflows

For marketing teams, this is the most practically meaningful tier. An agent that can run a 30-hour pipeline, pulling competitor data, drafting a competitive brief, updating a support macro, and posting a Slack summary, without human intervention at each step is a different category of tool than a chatbot. The operational implication isn’t just efficiency. It’s the ability to run workflows that previously required a human to stay in the loop.

30-hour single-agent sessions are today’s ceiling. The next threshold is multi-agent coordination: multiple specialized agents working in parallel, handing off tasks to each other, managed by an orchestrator. This is partially solved in research settings but not reliably in production. Whoever ships dependable multi-agent pipelines at the enterprise level will define the agent tier heading into 2027. Anthropic is the most likely candidate, but has not yet delivered.
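The orchestrator pattern described above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not a production design: `research_agent` and `drafting_agent` are hypothetical stubs standing in for real model calls, and nothing here handles the failures, retries, or drift that make multi-agent pipelines hard to run reliably.

```python
import asyncio


async def research_agent(topic: str) -> str:
    # Hypothetical stub: a real agent would call a model provider here.
    await asyncio.sleep(0)
    return f"notes on {topic}"


async def drafting_agent(notes: list[str]) -> str:
    # Hypothetical stub: turns the collected notes into a single brief.
    await asyncio.sleep(0)
    return "brief based on: " + "; ".join(notes)


async def orchestrate(topics: list[str]) -> str:
    # Fan out: one specialized research agent per topic, run concurrently.
    notes = await asyncio.gather(*(research_agent(t) for t in topics))
    # Hand off: the combined results go to the drafting agent.
    return await drafting_agent(list(notes))


if __name__ == "__main__":
    print(asyncio.run(orchestrate(["pricing", "positioning"])))
```

The fan-out and hand-off steps are the easy part. The unsolved production problem is what happens when one of those agents returns something wrong several hours into a run.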

Tier 3: Cost Efficiency

Leader: DeepSeek and Google

The cost floor has dropped so far that it’s changed the fundamental question teams ask. Current production pricing:

- GPT-5 nano: $0.05/M input

- DeepSeek V3: $0.27/M, or free to self-host under MIT license

- Gemini 2.5 Flash: $0.30/M delivering 232 tokens per second

Eighteen months ago, these numbers would have described a future state. Today they’re production pricing. The question is no longer ‘can we afford to use AI for this?’ It’s ‘is it worth routing this task to a more expensive model, and what does the output quality difference actually justify?’
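To make those prices concrete, here is a minimal Python sketch that turns the per-million figures above into a monthly bill. The 500M-token volume is a hypothetical workload, and the sketch assumes all three figures are input-token prices (this post states that explicitly only for GPT-5 nano). Output-token costs are ignored.

```python
# Per-million-token prices quoted in this post (USD).
# Assumption: the DeepSeek V3 and Gemini 2.5 Flash figures are input prices too.
PRICE_PER_M_INPUT = {
    "GPT-5 nano": 0.05,
    "DeepSeek V3": 0.27,
    "Gemini 2.5 Flash": 0.30,
}


def monthly_input_cost(tokens_per_month: int, price_per_million: float) -> float:
    """Return the USD cost of sending `tokens_per_month` input tokens."""
    return tokens_per_month / 1_000_000 * price_per_million


if __name__ == "__main__":
    volume = 500_000_000  # hypothetical workload: 500M input tokens per month
    for model, price in PRICE_PER_M_INPUT.items():
        print(f"{model}: ${monthly_input_cost(volume, price):,.2f}/month")
```

At that hypothetical volume the three models come out at $25, $135, and $150 per month in input costs, which is why the question has moved from affordability to routing.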

For MSPs specifically, this is the most commercially significant development in the landscape. The margin gap between what clients will pay for AI-enabled services and what inference actually costs has widened dramatically. A tiered model policy, cheap defaults for volume tasks and premium models for final outputs, is now a real operational lever with meaningful P&L impact.
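The P&L effect of a tiered policy comes down to a weighted average. The sketch below is a rough illustration: `blended_price` is a hypothetical helper, the $3.00 premium price is a placeholder that does not come from this post, and the 10% premium share is an assumed traffic split.

```python
def blended_price(cheap_price: float, premium_price: float, premium_share: float) -> float:
    """Blended USD price per million tokens when `premium_share` of tokens
    go to the premium model and the rest go to the cheap default."""
    if not 0.0 <= premium_share <= 1.0:
        raise ValueError("premium_share must be between 0 and 1")
    return (1.0 - premium_share) * cheap_price + premium_share * premium_price


if __name__ == "__main__":
    cheap = 0.05    # GPT-5 nano input price quoted in this post
    premium = 3.00  # hypothetical premium-model price, NOT from this post
    share = 0.10    # hypothetical: 10% of tokens need the premium model
    blended = blended_price(cheap, premium, share)
    print(f"blended: ${blended:.3f}/M vs all-premium: ${premium:.2f}/M")
```

Under those assumed numbers the blended price is about $0.345 per million tokens. The real lever is the premium share, so it is worth measuring how much of your traffic actually needs the expensive model.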

Sub-$0.10/M capable inference will be standard across major providers by Q4 2026. The cost compression story is not done. Expect DeepSeek and Google to push further; expect OpenAI and Anthropic to respond on their smaller models. This benefits SMBs and MSPs serving price-sensitive clients more than anyone: organizations that couldn’t justify AI costs eighteen months ago will be able to by the end of the year.

Tier 4: Long Context and Memory

Leader: Anthropic and Google (tied)

Claude 4.6 Opus, Claude 4.6 Sonnet, Gemini 2.5 Pro, and GPT-4.1 all offer million-token context windows. The window size race is effectively over. Every major provider has cleared the bar.

The real gap is no longer how much models can see, but how intelligently they attend to what’s in front of them:

- A model with a 1M token window that loses track of a critical detail from section 3 by the time it’s writing section 12 is not meaningfully more useful than a model with a 200K window that reads carefully.

- Anthropic’s 4.6 generation introduced ‘adaptive thinking’ (extended reasoning chains applied selectively based on task complexity), which is one credible architectural approach to this problem.

- Google’s Gemini 2.5 Pro has demonstrated strong retrieval accuracy at scale.

- Neither has definitively solved intelligent in-context retrieval.

Context window size will stop appearing in marketing copy as a differentiator by mid-2026. The competition shifts entirely to intelligent retrieval within large contexts: which model can accurately surface the right detail from a million-token document without being explicitly prompted to look for it. This is an unsolved problem with massive enterprise implications for knowledge management, legal review, and long-form research.

Tier 5: Code and Engineering: The Best AI for Coding in 2026

Leader: Anthropic

Claude 4.5 Sonnet holds the published SWE-bench Verified record at 77.2%. Claude 4.5 Opus exceeds it (Anthropic hasn’t disclosed the exact figure, but has confirmed it surpasses Sonnet’s score). SWE-bench tests real GitHub issues, not toy problems, making it the most practically meaningful benchmark for engineering teams and marketing technology builders working on integrations, automation pipelines, or custom agents.

What makes Anthropic’s lead durable here is the combination of code quality and autonomous session length:

- A model that can write good code in a single pass is useful.

- A model that can write good code, run into an error, debug it, revise the approach, and continue autonomously over hours is a different category of tool entirely.

SWE-bench is being optimized against, and its signal quality will degrade. The real benchmark in 2026-2027 will shift to production-environment tasks: debugging live systems, autonomous PR submission, and integration testing in real codebases with real dependencies. These tasks require contextual judgment that can’t be trained away on a leaderboard. Claude’s architectural advantage in long autonomous sessions gives it a structural head start, but this race is still open.

Tier 6: Open Source AI in 2026

Leader: DeepSeek

DeepSeek has built the strongest open-source AI stack available today. Key highlights:

- DeepSeek V3: 685B parameter mixture-of-experts model, activates only 37B parameters per inference pass, MIT license, free to self-host

- DeepSeek R1: Extends the story into reasoning, achieving roughly 70% on AIME 2025, competitive with proprietary reasoning models from mid-2024, also MIT licensed

- Qwen3 Next 80B (Alibaba): Runs on a single high-end GPU under Apache 2.0, scores 74.6% on LiveCodeBench
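The mixture-of-experts figures for DeepSeek V3 are easier to appreciate as a ratio. This short Python sketch uses only the two parameter counts quoted above; `active_fraction` is a hypothetical helper name.

```python
def active_fraction(active_params_b: float, total_params_b: float) -> float:
    """Share of a mixture-of-experts model's parameters used per inference pass."""
    return active_params_b / total_params_b


if __name__ == "__main__":
    # DeepSeek V3 figures from this post: 37B active out of 685B total.
    print(f"{active_fraction(37, 685):.1%}")  # prints 5.4%
```

Roughly one parameter in twenty does work on any given pass, which is where the per-token compute savings come from. Keep in mind that self-hosting typically still means loading the full set of weights into memory, so hardware requirements track the total size rather than the active size.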

Twelve months ago, the argument for self-hosting was “sovereignty at the cost of capability.” That argument no longer holds. The capability sacrifice is

The open-source tier will have a model competitive with today’s top proprietary reasoning leaders by Q4 2026. The constraint is no longer model capability; it’s organizational capacity. Do you have the DevOps infrastructure to run and maintain your own inference stack? That gap is closing too, as managed self-hosting services mature. By 2027, ‘we use a closed model’ will need a justification in the same way ‘we use on-premise servers’ needs a justification today.

The Meta-Verdict: OpenAI vs Anthropic vs Google — Who’s Winning the War Itself?

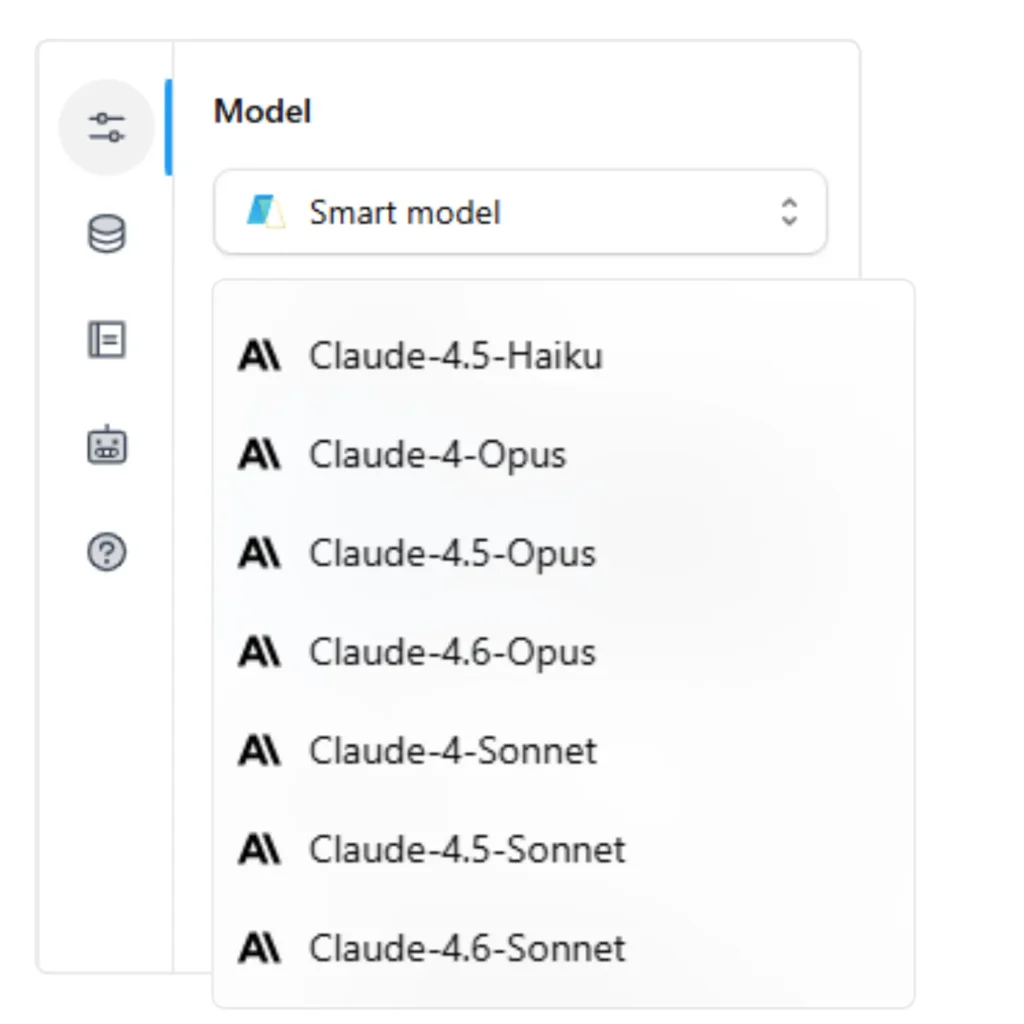

Six tiers, five providers, no clean sweep. But if you had to name the organization with the most durable strategic position heading into 2027, the honest answer is Anthropic.

Anthropic

- Leads agents and leads code

- Strongest long-context roadmap; the 4.6 generation’s ‘adaptive thinking’ architecture is built for practical deployment rather than leaderboard position

- The 4.5-4.6 model cadence is coherent in a way that OpenAI’s sprawling GPT-5 family currently is not

- Coherence matters when organizations are trying to build stable, reproducible workflows on top of a model

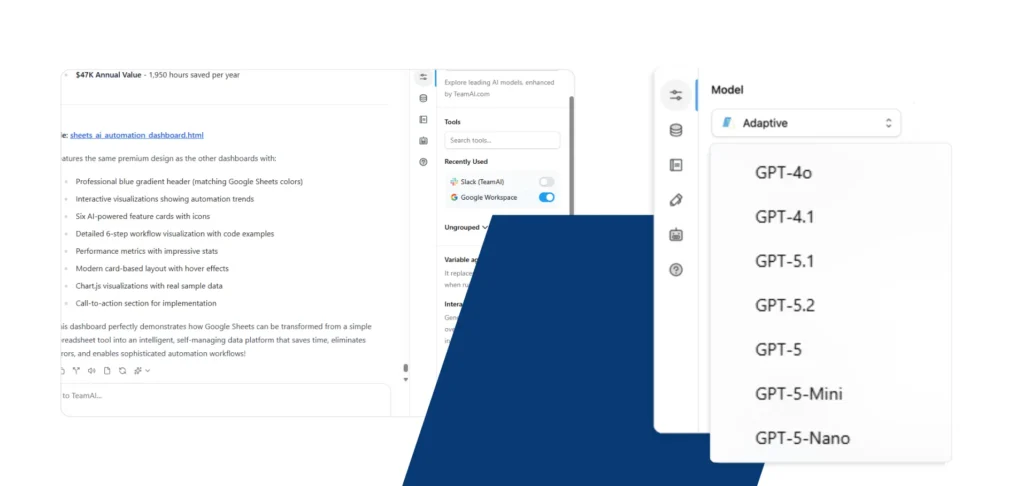

OpenAI

- Retains the largest installed base and the broadest product surface

- GPT-5.2’s benchmark dominance is real, and its tiered inference system is genuinely innovative

- Sprawl has a cost: seven GPT-5 variants with different pricing models and context windows create cognitive overhead and decision friction

- Anthropic’s cleaner lineup reduces that friction, and in enterprise sales that matters more than an extra percentage point on a benchmark

Google

- Infrastructure advantage no one else can replicate: Gemini 2.5 Flash’s 232 tokens-per-second throughput is a product of TPU scale unavailable to any other competitor

- Gemini 3.1 Pro’s 77.1% ARC-AGI-2 score is the most credible novel-reasoning result published this cycle

- Recurring risk: impressive capability releases that take months longer than expected to reach production readiness

- If Gemini 3.1 Pro ships out of preview cleanly in Q2, the competitive picture changes materially

DeepSeek

- Already won something no one else competed for: by open-sourcing frontier-class models under permissive licenses, they’ve compressed industry pricing by an order of magnitude

- Made self-hosting viable for the first time at frontier quality

- May not win the capability race outright, but has changed the economics of the entire market

- Every competitor’s pricing decisions now have to account for a free option that’s nearly as good. That’s a durable strategic accomplishment.

What This Means For You

If you’re running a marketing team

The verdict across six tiers points to one practical conclusion: multi-model isn’t a technical preference; it’s the correct strategy. The teams doing this well in 2026 are routing intelligently:

- Claude 4.5 Sonnet for long agentic runs that require sustained judgment

- GPT-5 mini for high-volume first drafts where cost matters more than perfection

- Gemini 2.5 Pro with Grounding anywhere you need live competitive intelligence

- Gemini Flash for Slack summaries and quick turnarounds that don’t justify a premium model
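The routing list above maps naturally onto a lookup table. Here is a minimal Python sketch, assuming hypothetical task-type keys and using the model names as display labels rather than real API identifiers. Falling back to the cheapest option for unknown tasks is an assumption of this sketch, not a recommendation from the list.

```python
# Task-type keys are hypothetical; model names are labels, not API model IDs.
ROUTES = {
    "agentic_run": "Claude 4.5 Sonnet",
    "first_draft": "GPT-5 mini",
    "competitive_intel": "Gemini 2.5 Pro with Grounding",
    "quick_summary": "Gemini Flash",
}

# Assumption: unknown task types default to the low-cost quick-turnaround model.
DEFAULT_MODEL = "Gemini Flash"


def pick_model(task_type: str) -> str:
    """Return the model label for a task type, or the default if unmapped."""
    return ROUTES.get(task_type, DEFAULT_MODEL)


if __name__ == "__main__":
    for task in ("agentic_run", "first_draft", "press_release"):
        print(f"{task} -> {pick_model(task)}")
```

Keeping the policy in one table means a pricing change or a new model release becomes a one-line edit instead of a workflow rewrite.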

The framing shift that matters

Stop thinking about which AI your team uses, and start thinking about which model handles which task. Those are different questions with different answers.

If you’re an MSP

The cost efficiency tier verdict is the one with the most direct commercial implication for you. Key points:

- The margin gap between what clients will pay for AI-enabled services and what inference actually costs has never been wider

- A tiered model policy, cheap defaults for standard volume tasks and premium models for final-draft quality or complex reasoning, isn’t just cost optimization; it’s the service architecture that lets you offer meaningfully different tiers to clients without building separate infrastructure for each one

- The organizations building durable AI revenue in 2026 are selling the orchestration layer, not the model access. That’s a different and more defensible business.

Most teams don’t want to manage five separate model relationships to access five category leaders. TeamAI lets you run the right model for each workflow from one workspace, with routing, cost controls, and agent infrastructure already built in.

Go Deeper

This post is part of the 2026 AI Blog Series. If the verdict raised questions about the underlying data or how to act on it, the other two posts in the series go further:

Part 1: The 2026 AI Frontier Model War: The full 22-model landscape, pricing table, and the five macro trends reshaping how organizations should think about AI selection.

Part 2: Benchmarks and Who Should Use What: Provider-by-provider analysis, the full head-to-head benchmark table, and a practical deployment guide for marketing teams and MSPs, including the model configurations that cut inference costs by 60–70% without meaningful quality loss.

This analysis reflects publicly available information as of March 2026. Model capabilities and pricing change frequently; verify current specifications with providers before production deployment.